Building Platforms for the Next Decade

At Klika Tech, we believe that the most exciting breakthroughs happen when the physical and digital worlds meet. As an AWS Premier Tier Partner, we’ve been building a new generation of intelligent systems designed to connect large fleets of devices with highly scalable cloud services. The result will not be just another connected platform; it will be a living system able to sense its environment, reason about changing conditions, and act with a degree of autonomy that pushes beyond traditional automation.

This work has taken on new momentum through AWS’s Generative AI Accelerator Program, a strategic initiative that provides assessment frameworks, proof-of-concept funding, and access to cutting-edge AI services. This partnership is enabling us to transform theoretical possibilities into production-ready autonomous systems.

From the earliest design discussions, the ambition was clear: create architectures that combine the reliability of industrial systems with the elasticity and intelligence of modern cloud computing. We needed platforms that could adapt to unpredictable connectivity, operate securely at massive scale and, above all, learn and improve over time without constant human intervention.

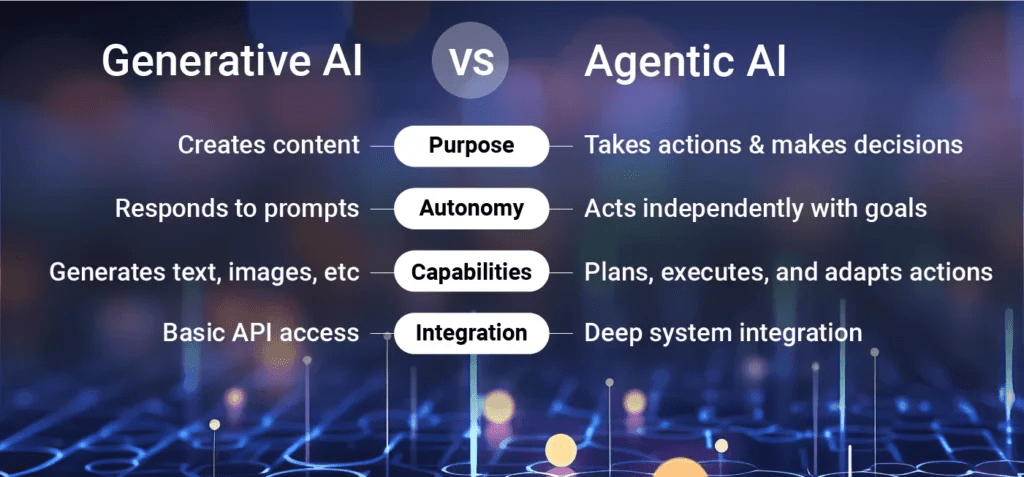

This journey is taking us deep into what is now called Agentic AI: systems composed of autonomous agents that perceive their environment, reason about complex situations, and take action to achieve defined objectives. Unlike traditional automation, which follows predetermined rules, these agents maintain their own understanding of the world, formulate plans dynamically, and adapt their behavior as situations evolve.

From Reactive Systems to Autonomous Agents

The shift from reactive to proactive intelligence represents a fundamental change in how we architect enterprise systems. Traditional automation excels at routine tasks: if condition A occurs, execute action B. But modern operational challenges are rarely that predictable. Equipment behaves in unexpected ways, users make suboptimal decisions, and optimal outcomes depend on synthesizing information from dozens of sources in real time.

Building truly autonomous systems requires rethinking the entire architecture. An agentic system doesn’t just detect an anomaly and alert someone; it reasons about the probable cause, evaluates potential interventions, coordinates with other systems, and takes corrective action, learning from the outcome to improve future responses.

The architecture comprises several interconnected layers:

Perception modules continuously ingest and interpret data from diverse sources: IoT sensors, enterprise databases, external APIs, and real-time event streams. These modules actively contextualize information, filtering noise and identifying what’s relevant to the agent’s objectives. At the edge, perception happens in real time with minimal latency. In the cloud, perception integrates information across entire fleets to identify system-wide patterns.

Reasoning engines form the cognitive core. They maintain knowledge graphs that represent relationships between assets, conditions, and outcomes. They apply logical inference to understand causality and increasingly leverage large language models to reason about ambiguous situations that rigid rule-based systems struggle to handle.

Planning and decision layers translate high-level goals into actionable sequences. For complex tasks, planning may involve multiple agents collaborating: one focused on diagnostics, another on scheduling interventions, and a third on resource allocation.

Action execution interfaces bridge the digital and physical worlds. They send commands to equipment, update records, trigger workflows, and communicate with stakeholders. Every action is logged, creating an audit trail that supports both debugging and compliance.

Memory and learning components ensure agents improve over time. Vector databases store past experiences for contextual retrieval, so when an agent encounters a situation similar to one it has seen before, it can draw on that experience.
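To make the relationship between these layers concrete, here is a minimal sketch of one agent cycle. It is an illustration only: the class, method names, device name, and temperature threshold are all hypothetical, and a single threshold check stands in for the knowledge graphs and language models described above.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """Toy agent wiring the five layers together (all names are hypothetical)."""
    temp_limit_c: float = 80.0                        # assumed threshold
    memory: list = field(default_factory=list)        # past (observation, actions) pairs
    audit_log: list = field(default_factory=list)     # every executed action

    def perceive(self, raw: dict) -> dict:
        # Perception: keep only the fields relevant to the agent's objective.
        return {"device": raw["device"], "temp_c": float(raw["temp_c"])}

    def reason(self, obs: dict) -> str:
        # Reasoning: a threshold stands in for a knowledge graph or LLM.
        return "overheating" if obs["temp_c"] > self.temp_limit_c else "normal"

    def plan(self, diagnosis: str) -> list:
        # Planning: translate the diagnosis into an ordered list of actions.
        if diagnosis == "overheating":
            return ["reduce_load", "notify_operator"]
        return []

    def act(self, obs: dict, actions: list) -> None:
        # Action execution: here we only record each action in the audit log.
        for action in actions:
            self.audit_log.append((obs["device"], action))

    def learn(self, obs: dict, actions: list) -> None:
        # Memory: store the experience for later retrieval.
        self.memory.append((obs, actions))

    def step(self, raw: dict) -> list:
        obs = self.perceive(raw)
        actions = self.plan(self.reason(obs))
        self.act(obs, actions)
        self.learn(obs, actions)
        return actions


agent = Agent()
print(agent.step({"device": "pump-7", "temp_c": "91.5", "rssi": -60}))
print(agent.step({"device": "pump-7", "temp_c": "42.0", "rssi": -61}))
```

In a real deployment each method would sit behind its own service boundary; keeping them as separate steps is what lets one layer be replaced without touching the others.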

Engineering the Cloud-Edge Continuum with AWS

One of our key architectural insights is that the most capable autonomous systems distribute intelligence across the cloud-edge continuum based on latency requirements, bandwidth constraints, and reliability needs.

Cloud intelligence provides the backbone where distributed services ingest continuous telemetry from thousands of edge devices. We chose Amazon Web Services as our foundation, engineering a tightly integrated environment that balances performance, flexibility and cost.

AWS IoT Core provides the secure communication layer, giving each device a unique identity and ensuring authenticated, encrypted messaging. Event-driven computing with AWS Lambda allows us to react instantly to changes without maintaining large clusters. For long-running microservices and custom orchestration logic, we rely on Amazon ECS, which provides container orchestration with operational maturity.

A custom rules engine sits at the heart of the data flow, continuously evaluating incoming streams, classifying states, and triggering automated actions. Visual insight is delivered through interactive dashboards that surface live operational metrics and historical trends.
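As a rough illustration of what such a rules engine does, the sketch below evaluates each incoming message against an ordered list of rules, classifies its state, and names the action to trigger. The rule names, the `battery_pct` field, the thresholds, and the first-match-wins policy are assumptions made for this example, not a description of the production engine.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass(frozen=True)
class Rule:
    name: str
    state: str                           # state assigned when the rule matches
    condition: Callable[[dict], bool]    # predicate over one telemetry message
    action: str                          # label of the action to trigger


# Rules are checked in order and the first match wins (an assumed policy).
RULES = [
    Rule("battery_critical", "critical", lambda m: m["battery_pct"] < 10, "shutdown_safely"),
    Rule("battery_low", "warning", lambda m: m["battery_pct"] < 25, "notify_operator"),
]


def evaluate(message: dict) -> Tuple[str, Optional[str]]:
    """Classify one message and return (state, action or None)."""
    for rule in RULES:
        if rule.condition(message):
            return rule.state, rule.action
    return "ok", None


stream = [{"battery_pct": 80}, {"battery_pct": 20}, {"battery_pct": 5}]
for msg in stream:
    print(msg, "->", evaluate(msg))
```

Keeping rules as data rather than hard-coded branches means they can be versioned and updated without redeploying the service that evaluates them.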

Edge intelligence is where immediate action must occur. Advanced controllers collect and preprocess high-frequency sensor data, applying early-stage analytics before anything reaches the cloud. By detecting anomalies and filtering noise close to the source, we reduce network load dramatically.

AWS IoT Greengrass plays a crucial role by extending cloud capabilities to the very edge. It allows selected workloads, such as data filtering, first-line anomaly detection, and even agent reasoning, to run directly on embedded controllers. This means that even if connectivity is interrupted, the system continues functioning autonomously. Decisions can be taken locally, and only the most relevant data is sent upstream.
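The local-first behavior described here can be sketched in plain Python. This is not Greengrass code: the `EdgeBuffer` class, its baseline and tolerance parameters, and the `send` callback are hypothetical stand-ins that show the pattern of filtering at the source and forwarding only relevant readings once the uplink is available.

```python
from collections import deque


class EdgeBuffer:
    """Filters readings locally and queues relevant ones until the uplink returns."""

    def __init__(self, baseline: float, tolerance: float, max_queue: int = 1000):
        self.baseline = baseline
        self.tolerance = tolerance
        self.queue = deque(maxlen=max_queue)  # oldest entries are dropped when full

    def ingest(self, reading: float) -> bool:
        """Return True if the reading is anomalous; only those are queued."""
        anomalous = abs(reading - self.baseline) > self.tolerance
        if anomalous:
            self.queue.append(reading)
        return anomalous

    def flush(self, online: bool, send) -> int:
        """Send queued readings if connected; return how many were sent."""
        if not online:
            return 0
        sent = 0
        while self.queue:
            send(self.queue.popleft())
            sent += 1
        return sent


buf = EdgeBuffer(baseline=50.0, tolerance=5.0)
for reading in [50.2, 49.8, 61.0, 50.1, 38.5]:
    buf.ingest(reading)

uploaded = []
print(buf.flush(online=False, send=uploaded.append))  # offline: nothing is sent
print(buf.flush(online=True, send=uploaded.append), uploaded)
```

Of the five readings, only the two outside the tolerance band are ever queued, which is the mechanism behind the reduced network load.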

The Generative AI Frontier: Real Challenges, Real Solutions

With a secure and scalable foundation in place, we are now pushing into the next frontier through our work with AWS’s Generative AI Accelerator Program. This initiative is helping us address real operational challenges with autonomous AI solutions.

Through structured workshops and assessments, we’ve identified several high-value use cases where agentic AI can transform operations:

Intelligent Troubleshooting Agents: We are developing agents that analyze telemetry data from connected devices to automatically identify malfunction causes. Rather than waiting for support tickets, these agents will detect issues proactively, consult documentation and historical data, create tickets automatically, and even suggest or implement remediation steps. This moves us from reactive support to predictive maintenance.

Behavioral Pattern Analysis and User Education: One of our most promising initiatives will address a common challenge: users making suboptimal decisions that undermine system performance. We are building agents that analyze usage patterns, identify behaviors that reduce efficiency, and proactively deliver personalized educational suggestions. The goal isn’t just automation; it’s building user trust through transparent, explainable recommendations that help people understand and optimize their interactions with complex systems.

Anomaly Detection at Scale: With millions of daily telemetry messages and fault reports, human analysis becomes impractical. We are developing agentic systems that process these massive data volumes, detect anomalies in real time, and take appropriate action, whether that’s sending commands to devices, notifying operators, or escalating to human review, based on confidence levels and severity.
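One way to picture the routing between device commands, operator notifications, and human review is a small policy function over confidence and severity. The thresholds (0.6 and 0.95), the three severity levels, and the action labels below are illustrative assumptions, not values from our deployments.

```python
def route(confidence: float, severity: str) -> str:
    """Pick an action channel; thresholds are illustrative assumptions."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if severity not in {"low", "medium", "high"}:
        raise ValueError(f"unknown severity: {severity}")
    if confidence < 0.6:
        return "escalate_to_human"  # too uncertain to act on automatically
    if severity == "high":
        # High-impact actions are automated only at very high confidence.
        return "send_device_command" if confidence >= 0.95 else "escalate_to_human"
    if severity == "medium":
        return "notify_operator"
    return "send_device_command"


for conf, sev in [(0.50, "low"), (0.80, "low"), (0.80, "medium"), (0.80, "high"), (0.97, "high")]:
    print(conf, sev, "->", route(conf, sev))
```

The design choice worth noting is that uncertainty and impact both push toward human review, so autonomy is granted only where the agent is confident and the consequences of a wrong action are bounded.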

“Agentic AI represents a shift in the software development paradigm. It allows the systems to embrace uncertainty that is inherent in the real world and create software that truly adapts to ever-changing tasks. It fills the niche not covered before, giving developers the means to cover both static and dynamic processes.

Working in a new field with little prior knowledge, developers of agentic systems need robust and flexible tools that support any system they envision without tying their hands. AWS offerings fill this role perfectly, giving a wide selection of models through their Amazon Bedrock, providing agentic infrastructure with their Amazon Bedrock AgentCore, and offering a framework to actually write the code with their Strands Agents.

Being inherently stochastic, agents are not the silver bullet. The main challenge is how to guide them and constrain them to be reliable, safe and secure, with an emphasis on security. Understanding how to safely provide external context to gullible models is, unfortunately, still an unsolved critical problem.

However, it doesn’t mean that properly constrained agents can’t be built right now. In the IoT sphere, I see a lot of customer-facing and maintenance tasks increasingly delegated to agents.”

By: Konstantin Meshcheryakov

Head of AI Competency, Klika Tech

Amazon Bedrock is central to these initiatives. We’re leveraging Claude Sonnet 4.5 for its superior factual consistency in user-facing generation tasks, which is critical when providing recommendations involving cost savings or operational decisions. For analytical tasks, we’re exploring Amazon Nova Pro to create hybrid agentic workflows that combine generation with deep data analysis.

The technical approach emphasises factuality and explainability. Agents are strictly grounded on structured data passed directly into prompt contexts, minimising hallucination risks. By developing “golden datasets” for few-shot learning, we can ensure agents provide relevant, helpful guidance based on proven patterns. Amazon Bedrock Knowledge Bases could provide RAG capabilities, allowing agents to ground recommendations in documentation and historical knowledge.
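A minimal sketch of this grounding pattern is shown below: structured data is serialized straight into the prompt, preceded by a few examples from a golden dataset. The function name, the prompt wording, and the example record are hypothetical, and the sketch stops at prompt construction without calling any model.

```python
import json


def build_grounded_prompt(device_data: dict, golden_examples: list) -> str:
    """Assemble a prompt that restricts the model to the supplied data."""
    parts = [
        "You are an assistant for device operators.",
        "Use ONLY the values in the DATA block. If a value you need is missing, say so.",
        "",
    ]
    # Few-shot examples drawn from a curated golden dataset.
    for example in golden_examples:
        parts.append("DATA: " + json.dumps(example["data"], sort_keys=True))
        parts.append("RECOMMENDATION: " + example["recommendation"])
        parts.append("")
    # The live record goes last, leaving the recommendation for the model to fill in.
    parts.append("DATA: " + json.dumps(device_data, sort_keys=True))
    parts.append("RECOMMENDATION:")
    return "\n".join(parts)


golden = [{
    "data": {"mode": "boost", "hours_per_day": 9},
    "recommendation": "Boost mode ran 9 hours per day; switching to eco mode overnight may lower energy use.",
}]
prompt = build_grounded_prompt({"mode": "eco", "hours_per_day": 3}, golden)
print(prompt)
```

Because every number the model may cite appears verbatim in the prompt, a reviewer can check a generated recommendation against the data block line by line.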

Perhaps most importantly, these agents will explain themselves. When making significant decisions, they generate natural-language justifications that help operators understand reasoning, build trust, and override when appropriate. This transparency is crucial for regulated environments where blind trust in autonomous decisions isn’t acceptable.

Technical Challenges We’re Solving

Building autonomous systems at scale introduces several technical hurdles we’re actively addressing:

Data fidelity and contextual understanding prove more challenging than anticipated. Autonomous agents making consequential decisions require high-quality, real-time information. We’re investing heavily in data validation pipelines, anomaly detection for sensor streams, and comprehensive knowledge bases.

Factuality constraints are paramount. When agents provide financial recommendations or operational guidance, accuracy isn’t optional; it’s essential. Our technical architecture strictly grounds models on validated data, with mandatory subject matter expert review processes built into development workflows.

Multi-agent orchestration becomes critical as systems scale. We’re developing orchestration frameworks using AWS Step Functions and Amazon Bedrock AgentCore to manage agent lifecycles, facilitate inter-agent communication through robust messaging queues, and prevent conflicts or redundancies.

Security and trust take on new dimensions with autonomous systems. Our defence-in-depth model includes secure device provisioning, role-based access controls for agent actions, continuous monitoring of agent behaviour, and automated response to anomalies. Every agent action is logged and auditable.
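As a simplified illustration of role-based control over agent actions with an audit trail, the sketch below checks each requested action against a per-role allowlist and logs the decision either way. The role names, action labels, and in-memory log are hypothetical; a production system would rely on managed identity and logging services rather than a Python dictionary.

```python
from datetime import datetime, timezone

# Hypothetical allowlist: which actions each agent role may perform.
PERMISSIONS = {
    "diagnostics-agent": {"read_telemetry", "create_ticket"},
    "remediation-agent": {"read_telemetry", "restart_device"},
}

audit_log = []  # in-memory stand-in for a durable audit store


def authorize(agent_role: str, action: str) -> bool:
    """Allow the action only if the role's allowlist contains it; log every decision."""
    allowed = action in PERMISSIONS.get(agent_role, set())
    audit_log.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "role": agent_role,
        "action": action,
        "allowed": allowed,
    })
    return allowed


print(authorize("diagnostics-agent", "create_ticket"))
print(authorize("diagnostics-agent", "restart_device"))
print(len(audit_log), "decisions logged")
```

Denied requests are logged as well as granted ones, since repeated denials are themselves a signal worth monitoring.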

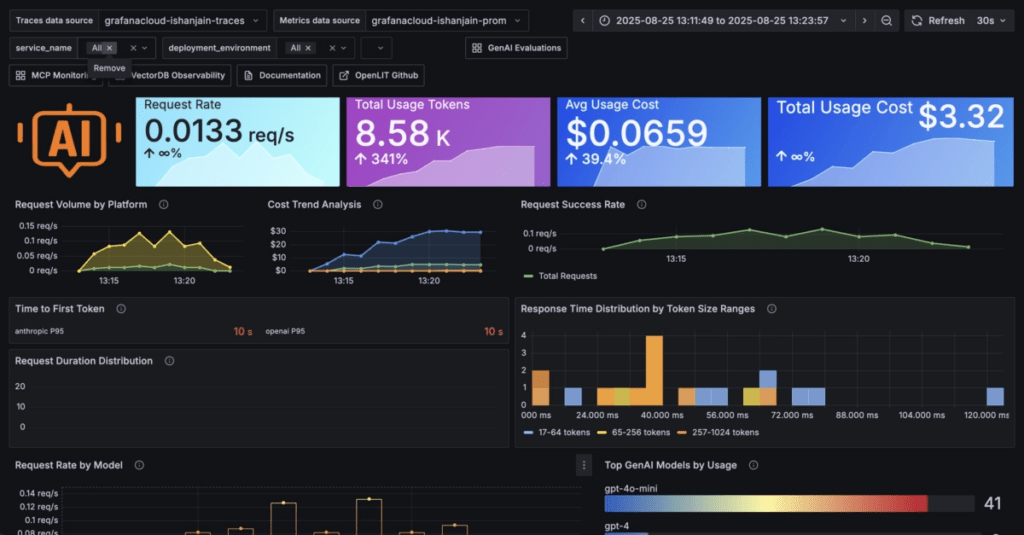

Observability and explainability are non-negotiable. We’re building comprehensive monitoring with Amazon CloudWatch and OpenTelemetry integration, capturing distributed traces that show how information flows through agent perception, reasoning, and action modules. Specialised dashboards visualise agent state, decision trees, and confidence levels.

Lessons in Building for the Future

Our work with AWS has reinforced several principles that now guide our approach:

Modularity is essential. We treat models and agents as independently deployable services that can be updated, tested, and rolled back without destabilizing entire systems.

Infrastructure as Code enables scalability. Using AWS CloudFormation ensures the same architecture supporting pilot deployments can expand to tens of thousands of devices without a fundamental redesign.

DevOps and MLOps are inseparable. We’re implementing automated CI/CD pipelines with MLOps practices (model versioning, testing in staging environments, canary releases, and automated retraining) to ensure agents evolve reliably.

Phased implementation mitigates risk. Our approach with AWS starts with proofs of concept that validate technical feasibility and business value before progressing to full production systems. This incremental strategy ensures each phase builds on proven foundations.

Cross-functional collaboration drives success. Subject matter experts, cloud architects, data scientists, and AI engineers must work side by side, validating outputs and ensuring solutions address real operational needs.

Real-World Impact and the Path Forward

The convergence of edge intelligence, cloud orchestration, and agentic AI isn’t theoretical; it’s delivering measurable value today while pointing toward even greater possibilities tomorrow.

These systems don’t just improve efficiency; they enable entirely new operational models. When assets become self-managing and predictive, they transform from capital investments into intelligent services. When operations become autonomous, organisations scale without proportional increases in staff. When systems continuously learn, they compound competitive advantage over time.

Our partnership with AWS accelerates this vision. Through their Generative AI Accelerator Program, we’re not just accessing cutting-edge technology; we’re gaining structured frameworks for assessment, proof-of-concept funding, and technical guidance. This enables us to move faster from concept to production, validating approaches and building confidence before major investments.

The most exciting aspect isn’t the technology itself; it’s what the technology enables: autonomous systems that free human experts from routine analysis so they can focus on strategic challenges, operations that continue reliably even when faced with unexpected disruptions, and businesses that adapt intelligently to changing conditions rather than just grow larger.

Conclusion: Engineering Intelligence That Adapts

Klika Tech’s work with AWS demonstrates what becomes possible when cloud scalability, edge intelligence, and emerging AI are brought together with strategic partnership and structured methodology. It shows that with the right engineering discipline and the right partner, we can build systems that are secure, resilient, and capable of evolving toward true autonomy.

As we continue developing predictive analytics, generative AI agents, and multi-agent coordination systems, the boundary between human oversight and machine agency will keep shifting. Our goal is not to replace human decision-making but to augment it, giving operators tools that understand context, anticipate problems, and recommend or execute optimal actions.

The autonomous enterprise is not in the distant future; it’s being built today by organizations willing to invest in foundational capabilities and strategic partnerships that make true autonomy possible. Through our collaboration with AWS, we’re hoping to help shape a future where connected systems are not just smart but truly autonomous, and where technology works alongside people to create safer, more efficient, and more adaptive environments.

By: Mohammad Shirazi, Portfolio Manager, Klika Tech